Hi everyone.

I have some questions about a paper.

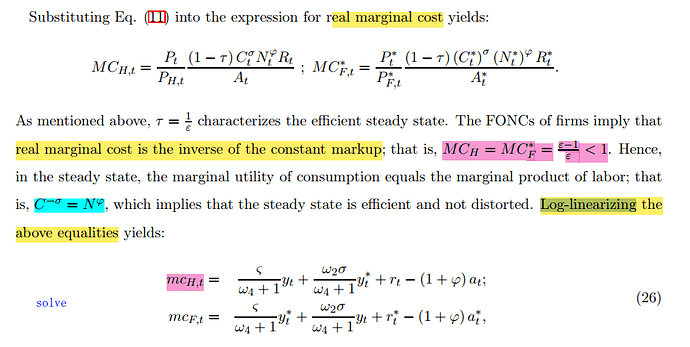

- Could anyone tell me why the equation C^{-\sigma}=N^\varphi (highlighted in blue in the picture) holds? What happened to P, R, and A?

I think in the steady state, R and A will stay at 1 and P_t/P_{H,t}=1, but I am not sure.

- Will the log-linearized version of MC be equal to zero, and will the log-linearized version of A become zero, too? I ask because MC and A become constants in the steady state, as illustrated above. If I am wrong, what happens to equation (26) in the steady state?
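
To make clear what I mean by a log-linearized variable being zero, this is the definition I am assuming (a hat denotes the log-deviation from the steady state; this notation is mine, and the paper may use lowercase letters instead):

$$
\hat{x}_t \equiv \log X_t - \log X
\quad\Longrightarrow\quad
\hat{x} = \log X - \log X = 0 \ \text{ in the steady state.}
$$

If this is right, the log-deviations of MC and A are zero at the steady state itself, but they can still be nonzero away from it.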

Thanks for any suggestions! Best wishes to you!

Here is the paper:

3.Tae-Seok, Eiji, 2013, Productivity shocks and monetary policy in a two-country model, Dynare working papers.pdf (348.3 KB)

Thank you for your reply, Professor jpfeifer!

Here are my thoughts and questions:

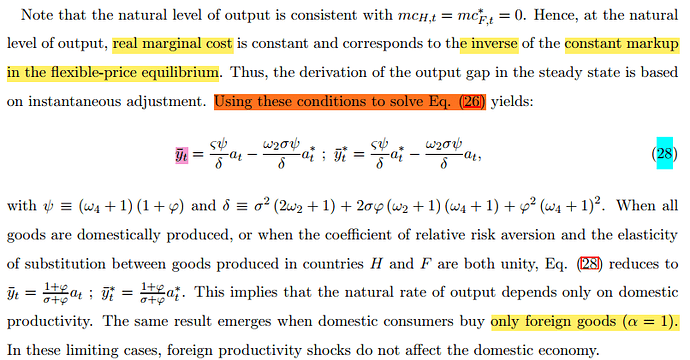

1. The log-linearized version of R is an endogenous variable, so I think the same thing happens to R as to A and P: in the steady state, R stays at 1. I am not sure about this, though.

According to the paper: 1/R(t) = Q(t,t+1) = C(t+1)^(-sigma)*P(t) / (P(t+1)*C(t)^(-sigma))

2. In the computations from (26) to (28), I am also confused about what happened to r(t): it disappears. I can get the numerator of (28) without using r(t), so I guess it is combined with y(t) and delta, but I have no idea how to do that. Could you please offer some suggestions on how to eliminate r(t) and obtain delta?
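
On point 1, this is the step I have in mind. Evaluating the relation quoted there at a steady state with constant consumption and prices, C(t+1) = C(t) = C and P(t+1) = P(t) = P, gives:

$$
\frac{1}{R} = Q = \frac{C^{-\sigma}\, P}{P\, C^{-\sigma}} = 1
\quad\Longrightarrow\quad
R = 1 .
$$

This uses the relation exactly as I wrote it. If the paper's stochastic discount factor also contains a discount factor \beta (an assumption on my part, since it does not appear in the relation as I quoted it), the same step would give R = 1/\beta rather than 1.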

Once again, thank you for helping me so many times!

To be honest, I am still confused about which formulas of the model should be entered in Dynare, so I can only follow the paper’s approach to learn about the relationship between the Dynare code and the model.

I also found an easier formula that can replace formula (28) in Dynare to solve this problem.

Thanks for your help! Best wishes to you!